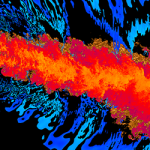

Proton beams are increasingly used to treat cancer with greater precision. To plan proton treatment, X-ray computed tomography (X-ray CT) is typically used to produce an image of the tumor site, a process that involves…